- Hardware and software are in a constant, often adversarial negotiation for optimal performance.

- Co-design, where hardware is built specifically for software (and vice-versa), is the new frontier for efficiency.

- Operating systems, drivers, and firmware are critical, often overlooked, translators in this complex relationship.

- Understanding their dynamic tension reveals why some devices excel and others falter, even with similar specs.

The Invisible Architects: How Hardware and Software Co-Evolved

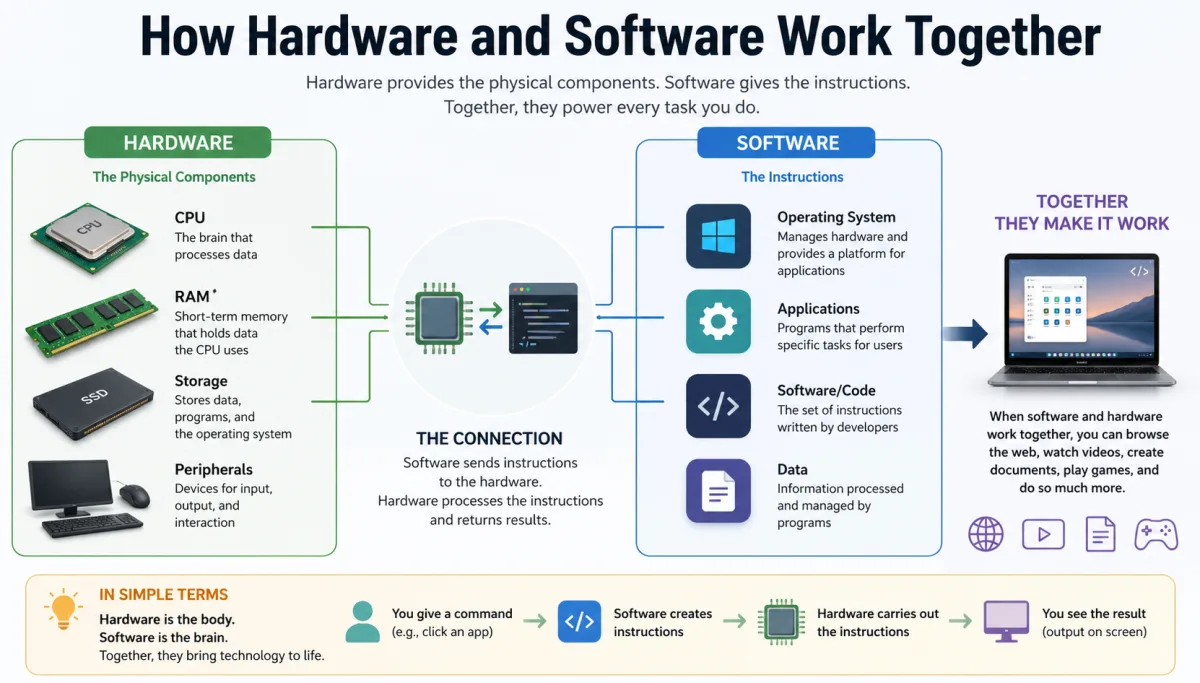

The popular narrative suggests that hardware and software are like a brain and a mind – distinct but interdependent. But here's the thing: their relationship is far more intimate, a relentless dance of pushing boundaries and setting limitations. From the very first computers, the capabilities of the physical machine directly dictated what programs could run, and conversely, ambitious software ideas spurred new hardware innovations. It's a feedback loop that has defined technological progress, often through inherent tension. You see it in early mainframes, where every line of code was meticulously crafted to conserve precious, limited memory and processing cycles. Engineers weren't just writing programs; they were fundamentally designing an interaction model for how hardware and software work together at the most elemental level.

From Punch Cards to Processors: Early Symbiosis

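At its core, the stored-program idea traced in this section means that instructions live in the same memory as data, so changing a program is just a matter of writing new values. The toy accumulator machine below is a minimal sketch under that assumption. Its `LOAD`/`ADD`/`STORE`/`HALT` opcodes and the `run` function are hypothetical and do not reproduce EDSAC's real instruction set.

```python
# A toy stored-program machine (hypothetical instruction set, for illustration only).
# Program and data share one memory list: instructions are (opcode, address) tuples,
# data cells are plain integers.


def run(memory: list, max_steps: int = 1000) -> int:
    """Execute instructions starting at memory[0]; return the accumulator at HALT."""
    acc = 0  # the single accumulator register
    pc = 0   # program counter: address of the next instruction
    for _ in range(max_steps):
        opcode, addr = memory[pc]
        pc += 1
        if opcode == "LOAD":
            acc = memory[addr]
        elif opcode == "ADD":
            acc += memory[addr]
        elif opcode == "STORE":
            memory[addr] = acc
        elif opcode == "HALT":
            return acc
        else:
            raise ValueError(f"unknown opcode {opcode!r}")
    raise RuntimeError("step limit exceeded")


memory = [
    ("LOAD", 4),   # 0: acc = memory[4]
    ("ADD", 5),    # 1: acc += memory[5]
    ("STORE", 6),  # 2: memory[6] = acc
    ("HALT", 0),   # 3: stop
    2, 3, 0,       # 4-6: data cells
]

print(run(memory))      # prints 5  (2 + 3)
memory[1] = ("ADD", 4)  # "reprogram" by overwriting a single memory cell
print(run(memory))      # prints 4  (2 + 2)
```

Changing what the machine computes took one assignment to `memory[1]` and no rewiring. That is the flexibility the stored-program design introduced.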
Before graphical interfaces and gigabytes, computers like the ENIAC, unveiled in 1946, had to be physically rewired for each new task. This was hardware as software, and software as hardware. The advent of stored-program computers, like the EDSAC in 1949, was a revelation. Instructions now lived in memory alongside data, so they could be changed without physically altering the machine, making software a flexible entity. Yet software was still deeply constrained by the hardware's architecture, its instruction set, and its limited resources. Every byte mattered. IBM's OS/360, announced in 1964 alongside the System/360 family, was a massive undertaking precisely because it had to manage incredibly diverse hardware configurations, a testament to the early challenges of abstracting the machine.

The Rise of Abstraction Layers

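One concrete form of the abstraction cost this section describes is the system call. Each time a program asks the OS to touch a device, execution crosses from the application into the kernel and back. The sketch below writes the same bytes either one byte per call or in larger chunks, and counts the calls. It assumes a POSIX-like system where Python's `os.write` issues one `write` system call per invocation. The helper name `write_in_chunks` is hypothetical, and elapsed times vary by machine, so treat them as indicative only.

```python
import os
import tempfile
import time


def write_in_chunks(path: str, data: bytes, chunk_size: int) -> tuple[int, float]:
    """Write `data` to `path` with os.write in `chunk_size` pieces.

    Returns (number of os.write calls, elapsed seconds). Every os.write call
    hands the work to the kernel, so more calls mean more user/kernel crossings.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    flags = os.O_WRONLY | os.O_CREAT | os.O_TRUNC | getattr(os, "O_BINARY", 0)
    fd = os.open(path, flags, 0o600)
    calls = 0
    start = time.perf_counter()
    try:
        view = memoryview(data)
        offset = 0
        while offset < len(data):
            # os.write may write fewer bytes than requested, so advance by its result.
            offset += os.write(fd, view[offset:offset + chunk_size])
            calls += 1
    finally:
        os.close(fd)
    return calls, time.perf_counter() - start


if __name__ == "__main__":
    payload = b"x" * 20_000
    with tempfile.TemporaryDirectory() as tmp:
        target = os.path.join(tmp, "out.bin")
        for size in (1, 4096):
            calls, secs = write_in_chunks(target, payload, size)
            print(f"chunk={size:>5}: {calls:>6} write calls, {secs * 1000:.1f} ms")
```

On most systems the one-byte-per-call run is noticeably slower even though both runs move the same data. Buffered I/O layers exist largely to batch many small writes into fewer calls.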
As hardware grew more complex, abstraction layers became essential. Operating systems emerged as sophisticated managers, insulating software developers from the gritty details of individual components. This allowed programmers to write applications that could run on a wider range of machines, fostering an explosion of software innovation. However, this abstraction came at a cost: a layer of inefficiency. Every request to the hardware now had to pass through the OS, typically as a system call that switches the processor from the application into the kernel and back, and each crossing adds latency. It's a trade-off still debated today, particularly in high-performance computing and real-time systems, where direct hardware access remains king for achieving peak speeds. The intricate dance of hardware-software synergy became an art form.

The OS as Orchestrator: Bridging the Divide

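The CPU-time juggling described in this section can be pictured with a simplified round-robin scheduler. In this model, each runnable task gets a fixed time slice in turn. It is only a teaching model. Real schedulers, including the one in the Windows kernel, layer thread priorities and preemption on top of this idea. The task names, time units, and the `round_robin` function here are hypothetical.

```python
from collections import deque


def round_robin(bursts: dict[str, int], quantum: int) -> list[tuple[str, int, int]]:
    """Simulate round-robin CPU scheduling.

    bursts:  task name -> CPU time it still needs (arbitrary time units)
    quantum: longest a task may run before going to the back of the queue
    Returns a timeline of (task, start, end) slices.
    """
    if quantum <= 0:
        raise ValueError("quantum must be positive")
    ready = deque((name, need) for name, need in bursts.items() if need > 0)
    timeline = []
    clock = 0
    while ready:
        name, remaining = ready.popleft()
        run = min(quantum, remaining)  # one full slice, or less if the task is nearly done
        timeline.append((name, clock, clock + run))
        clock += run
        if remaining > run:
            ready.append((name, remaining - run))  # preempted: rejoin the back of the queue
    return timeline


for task, start, end in round_robin({"browser": 5, "game": 3, "antivirus": 2}, quantum=2):
    print(f"t={start:>2}-{end:<2} {task}")
```

A smaller quantum lets every task make progress more often. The cost is more frequent switching, an overhead this simplified model ignores, and real schedulers tune that trade-off.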
Your operating system (Windows, macOS, Linux, Android, iOS) isn't just a pretty interface; it's the primary broker in the ceaseless negotiations between hardware and software. Think of it as the air traffic controller for your device's internal components. When you click an icon, the OS loads the program into memory, schedules its work on the CPU, manages storage access, and orchestrates interactions with peripherals like your display or printer. This is where the core functionality of how hardware and software work together is truly defined. Without this layer of management, every application would need to contain its own drivers and resource allocators, leading to an incredibly inefficient and unstable computing environment.

Consider Microsoft Windows, which, since its early versions, has evolved to manage an astonishing array of hardware. When a new graphics card is installed or a USB device is plugged in, it's the OS's job to identify it, load the correct driver, and make it available to applications. This process isn't always seamless, as anyone who's ever wrestled with an outdated driver can attest. The OS also acts as a gatekeeper, ensuring that applications play by the rules and don't directly conflict with each other or hog resources. For example, when you run multiple demanding applications, the Windows kernel's scheduler decides which thread gets CPU time and when, juggling the demands of various software on the finite resources of the underlying hardware. This operating system functionality is invisible but vital.

Drivers and Firmware: The Unsung Translators

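The translation chain this section walks through (game, graphics API, vendor driver, GPU) can be sketched as one interface with interchangeable implementations. Everything in the sketch is hypothetical: `GraphicsDriver`, `GraphicsAPI`, the two vendor classes, and the command strings are invented for illustration. None of them reflects real DirectX, OpenGL, or vendor driver interfaces. The point is that the game makes the same call no matter which driver is loaded.

```python
from abc import ABC, abstractmethod


class GraphicsDriver(ABC):
    """Hypothetical driver interface: turns a generic request into device commands."""

    @abstractmethod
    def draw_texture(self, texture_id: int) -> list[str]:
        ...


class VendorADriver(GraphicsDriver):
    def draw_texture(self, texture_id: int) -> list[str]:
        # Invented command stream for an imaginary "Vendor A" GPU.
        return [f"A_BIND {texture_id}", "A_DRAW_QUAD"]


class VendorBDriver(GraphicsDriver):
    def draw_texture(self, texture_id: int) -> list[str]:
        # A different imaginary GPU needs a different sequence for the same request.
        return [f"B_SET_SAMPLER 0 {texture_id}", "B_SUBMIT TRIANGLES 2"]


class GraphicsAPI:
    """Stand-in for the API layer a game calls; it forwards to whichever driver is loaded."""

    def __init__(self, driver: GraphicsDriver) -> None:
        self.driver = driver

    def draw_texture(self, texture_id: int) -> list[str]:
        return self.driver.draw_texture(texture_id)


# The "game" code is identical; only the installed driver differs.
for driver in (VendorADriver(), VendorBDriver()):
    print(type(driver).__name__, GraphicsAPI(driver).draw_texture(7))
```

Because the game only depends on the interface, a driver update can change the commands sent to the GPU without the game being modified. That is also why a buggy driver can break games that did nothing wrong.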
If the operating system is the general contractor, then drivers and firmware are the specialized foremen, speaking the precise language of individual hardware components. These aren't minor utilities; they are mission-critical pieces of software embedded within or closely tied to your hardware. Firmware is low-level software stored on the hardware itself, typically in flash memory on the motherboard or inside a device. In modern PCs, firmware implementing the Unified Extensible Firmware Interface (UEFI) tells the machine how to boot, initializes basic components, and hands off control to the operating system. It's among the first software to run when you power on your computer, a testament to its foundational role in how hardware and software work together.

Drivers, on the other hand, are software components that let the OS and applications interact with specific hardware devices. Take your graphics card, for instance. A game doesn't directly tell the GPU to draw a specific texture; it sends commands through a graphics API (like DirectX, OpenGL, or Vulkan), which then passes them to the GPU driver. The driver, written by the GPU manufacturer (like Nvidia or AMD), translates these high-level commands into the specific instructions that particular GPU model understands. This translation is vital: an outdated or buggy driver can cause anything from graphical glitches and crashes to significant performance drops. In 2023, Nvidia released over a dozen driver updates for its RTX 40-series GPUs, each often bringing performance improvements or stability fixes for new game titles, underscoring the constant evolution of this critical hardware-software handshake.

Performance Puzzles: When Software Outstrips Silicon (and vice-versa)

The relationship between hardware and software isn't always harmonious; it's often a tug-of-war. Sometimes cutting-edge software demands more processing power, memory, or graphical capability than even the latest hardware can comfortably provide. Think about the launch of a graphically intensive AAA video game. Developers push game engines to their absolute limits, and even the most powerful gaming PCs can struggle to maintain high frame rates at maximum settings. This isn't necessarily a hardware failure; it's software aggressively exploiting every available resource, sometimes exposing bottlenecks in the underlying silicon. This relentless push by software is a primary driver of hardware innovation, forcing chip manufacturers to keep inventing faster processors and more efficient architectures. The payoff can be measurable: a 2023 report by the U.S. Department of Energy highlighted that advancements in hardware-software co-optimization have contributed to a 15% improvement in data center energy efficiency over the past five years, directly impacting operational costs and environmental footprint.

Conversely, poorly optimized software can cripple even the most robust hardware. A mobile app rife with memory leaks or inefficient code can make a brand-new smartphone feel sluggish. Developers often fail to optimize their code for specific processor architectures or operating system quirks, leading to wasted cycles and battery drain. Here's where it gets interesting: the perception of device speed often has more to do with software optimization than raw hardware power. A well-coded application running on mid-range hardware can feel snappier than a bloated, inefficient one on a top-tier machine. On the user's side, clearing out unnecessary files and caches can also improve responsiveness on a cluttered device by reducing the work the hardware has to do. All of this illustrates just how intertwined device performance truly is.

“The future of computing isn’t about making hardware faster or software smarter in isolation; it’s about their symbiotic evolution,” states Dr. A.P. Singh, Professor of Computer Science at Stanford University, in his 2022 research on heterogeneous computing architectures. “We're moving beyond simple compatibility to intentional co-design, where chips are built with specific software workloads in mind, yielding efficiency gains of up to 50% in specialized tasks like AI inference.”

The Integrated Future: Co-Design as the New Frontier

For decades, the tech industry operated largely on a modular approach: Intel made chips, Microsoft made operating systems, and PC manufacturers assembled them. But this era is rapidly giving way to a new paradigm: co-design. Major players like Apple, Google, and even Tesla are investing heavily in designing their own custom silicon, precisely because they recognize that true optimization – and competitive advantage – lies in building hardware and software together, from the ground up. This isn't just about making them compatible; it's about creating a unified ecosystem where every transistor and every line of code are meticulously crafted to complement each other, fundamentally changing how hardware and software work together.

Apple's M-Series: A Case Study in Vertical Integration

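The unified memory design discussed in this section is about letting several processors work on one copy of the data instead of shuttling duplicates between separate memory pools. Python cannot program Apple's hardware this way, so the following is only a loose software analogy under that assumption. Two `memoryview` objects share one `bytearray` without copying it, while `bytes(...)` makes an independent copy, roughly like transferring data into a discrete GPU's separate memory. The names `cpu_view`, `gpu_view`, and `gpu_copy` are purely illustrative.

```python
# Loose analogy only: Python objects standing in for memory used by a CPU and a GPU.
frame = bytearray(b"pixel-data")

# "Unified memory": both views reference the same underlying buffer; nothing is copied.
cpu_view = memoryview(frame)
gpu_view = memoryview(frame)

# "Separate memory": an explicit copy into an independent buffer.
gpu_copy = bytes(frame)

# The CPU updates the frame in place (same length, so the buffer is not resized).
frame[0:5] = b"PIXEL"

print(gpu_view.tobytes())  # b'PIXEL-data': the shared view sees the update at once
print(gpu_copy)            # b'pixel-data': the copy is stale until copied again
```

In the shared case, nothing had to move for the second consumer to see the change. Avoiding that movement is the latency and power saving the unified design aims for.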
Apple's transition from Intel processors to its in-house M-series chips, beginning with the M1 in 2020, stands as a prime example. This strategic shift, spearheaded by leaders like Johny Srouji, Apple's Senior Vice President of Hardware Technologies, didn't just aim for faster CPUs. It was about creating a System-on-a-Chip (SoC) that integrated the CPU, GPU, and Neural Engine on a single die, paired with unified memory in the same package, all optimized for macOS and Apple's software stack. This deep integration allowed for major efficiency and performance gains. For instance, the unified memory architecture means the CPU and GPU can access the same data pool without copying it between separate memories, reducing latency and power consumption. This synergy allowed Apple to deliver professional-grade performance in a fanless laptop, the M1 MacBook Air.

Google's Tensor: AI at the Edge

Google followed a similar path with its Tensor chip, introduced in the Pixel 6 smartphone in 2021. While it includes standard CPU and GPU cores, the Tensor chip's standout feature is its custom Tensor Processing Unit (TPU), specifically designed to accelerate machine learning workloads. Google built this hardware not for general computing, but to supercharge its AI algorithms, enabling advanced features like real-time language translation, improved computational photography, and on-device voice recognition with remarkable speed and accuracy. This bespoke hardware allows Google's software to perform complex AI tasks locally on the device, enhancing privacy and reducing reliance on cloud servers, demonstrating a clear strategic choice to integrate hardware and software for a specific purpose.

The Security Tightrope: Hardware-Software Interplay in Protection

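Secure boot, one of the functions this section mentions, rests on a chain of trust. Each stage checks the next one before handing over control, and the boot halts if anything has been altered. The sketch below is a deliberately simplified model that compares SHA-256 digests against a trusted list. Real schemes such as UEFI Secure Boot verify cryptographic signatures against keys held in firmware. The stage names, images, and the `verified_boot` helper here are hypothetical.

```python
import hashlib


def digest(image: bytes) -> str:
    """SHA-256 fingerprint of a boot-stage image."""
    return hashlib.sha256(image).hexdigest()


def verified_boot(stages: list[tuple[str, bytes]], trusted: dict[str, str]) -> list[str]:
    """Walk the stages in order, refusing to hand off to any stage whose digest is not trusted."""
    booted = []
    for name, image in stages:
        if trusted.get(name) != digest(image):
            raise RuntimeError(f"verification failed for {name!r}; halting boot")
        booted.append(name)  # in a real system, control would pass to this stage here
    return booted


# Hypothetical stage images and the digests recorded when they were known to be good.
good = [("bootloader", b"loader v1"), ("kernel", b"kernel v1"), ("init", b"init v1")]
trusted = {name: digest(image) for name, image in good}

print(verified_boot(good, trusted))  # ['bootloader', 'kernel', 'init']

tampered = [("bootloader", b"loader v1"), ("kernel", b"kernel v1 + rootkit"), ("init", b"init v1")]
try:
    verified_boot(tampered, trusted)
except RuntimeError as err:
    print(err)  # verification failed for 'kernel'; halting boot
```

The hardware's part in the real version is to anchor the first check in something software cannot rewrite. This toy model simply assumes that anchor.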
Security isn't an afterthought; it's baked into the very fabric of how hardware and software work together. Every layer, from the silicon up to your browser, plays a role in protecting your data and privacy. However, this intricate interplay also creates vulnerabilities. The infamous Meltdown and Spectre vulnerabilities, publicly disclosed in January 2018, illustrate this perfectly. These weren't software bugs in the traditional sense, but design flaws in the speculative execution features of modern CPUs. Software patches were developed to mitigate these hardware vulnerabilities, but often at the cost of performance, highlighting the delicate balance.

Hardware often provides foundational security features that software then builds upon. Modern processors include features like Intel SGX (Software Guard Extensions) or ARM TrustZone, which set aside isolated execution environments (SGX calls them enclaves) where sensitive data and code can be processed, shielded even from the operating system. This hardware-level protection forms the bedrock for critical software functions like password management, digital rights management, and secure boot processes.

But wait, security is a moving target. As new threats emerge, both hardware and software must evolve. Regular software updates aren't just about new features; they frequently contain critical security patches that address newly discovered vulnerabilities, often those arising from the complex interaction between the code and the underlying silicon. This is why keeping your devices updated can drastically improve their security posture.

The User Experience Equation: More Than Just Specs

Ultimately, the success of how hardware and software work together is measured in the user experience. You don't care about clock speeds or instruction sets; you care whether your app loads quickly, your video plays smoothly, and your device feels responsive. This perceived performance is a direct outcome of their deep synergy. A powerful CPU with a clunky, unoptimized operating system can feel slower than a less powerful one running highly efficient software. Think about the iPhone's legendary "smoothness" even on older hardware. A significant part of this comes from Apple's meticulous optimization of iOS to work hand-in-glove with its specific A-series chips, including how animations are rendered.

The smoothness of user interface animations (scrolling, opening apps, transitioning between screens) is a critical factor in how "fast" a device feels. While raw GPU power is important, the software's ability to efficiently render these animations, schedule tasks, and manage memory plays an equally crucial role. If the software isn't optimized to leverage the hardware's capabilities for rendering, even a top-tier GPU can struggle to deliver a fluid experience. A study by Google's Material Design team in 2021 found that perceived performance, heavily influenced by animation fluidity, directly impacts user satisfaction and engagement by over 20%. This demonstrates that the feeling of speed isn't just about raw numbers; it's about the sophisticated ballet between the silicon and the code.

| Processor | Architecture | Geekbench 6 Single-Core Score (avg.) | Geekbench 6 Multi-Core Score (avg.) | Typical TDP (W) | Release Year | Source |
|---|---|---|---|---|---|---|
| Intel Core i7-13700K | Hybrid (P+E cores) | 2800 | 17000 | 125 | 2022 | AnandTech (2023) |
| AMD Ryzen 9 7900X | Zen 4 | 2200 | 17500 | 170 | 2022 | Tom's Hardware (2023) |
| Apple M2 Max | ARM-based SoC | 2800 | 14900 | 34 | 2023 | Macworld (2023) |
| Qualcomm Snapdragon 8 Gen 2 | ARM-based SoC | 1900 | 5300 | ~10 (mobile) | 2022 | GSM Arena (2023) |
| Intel Core i5-1135G7 | Tiger Lake | 1600 | 5800 | 28 | 2020 | Notebookcheck (2021) |
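The animation-smoothness point in the user-experience discussion above comes down to a time budget. At a given refresh rate, each frame has to be ready within 1000 / refresh-rate milliseconds. Otherwise the display typically shows the previous frame again and the motion stutters. This is a minimal sketch that assumes a simple model where any frame exceeding the budget counts as missed. The function names are hypothetical, and the frame times are made-up sample values, not measurements.

```python
def frame_budget_ms(refresh_hz: float) -> float:
    """Time available to produce one frame at the given display refresh rate."""
    if refresh_hz <= 0:
        raise ValueError("refresh rate must be positive")
    return 1000.0 / refresh_hz


def missed_frames(frame_times_ms: list[float], refresh_hz: float) -> int:
    """Count frames that took longer than the per-frame budget (simplified model)."""
    budget = frame_budget_ms(refresh_hz)
    return sum(1 for t in frame_times_ms if t > budget)


# Hypothetical render times (ms) for ten frames of a scrolling animation.
samples = [8.0, 9.5, 12.0, 15.0, 18.0, 9.0, 7.5, 22.0, 10.0, 11.0]

for hz in (60, 120):
    print(f"{hz} Hz: budget {frame_budget_ms(hz):.2f} ms, "
          f"missed {missed_frames(samples, hz)} of {len(samples)} frames")
# 60 Hz: budget 16.67 ms, missed 2 of 10 frames
# 120 Hz: budget 8.33 ms, missed 8 of 10 frames
```

The same frames that mostly fit a 60 Hz budget miss most of a 120 Hz one. This is why faster displays raise the bar for software optimization, not just for GPU power.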

Optimizing Device Performance: Practical Steps for Software-Hardware Harmony

- Keep Your Operating System Updated: Manufacturers frequently release performance and security patches that optimize the OS's interaction with underlying hardware.

- Update Drivers Regularly: Especially for critical components like graphics cards, sound cards, and network adapters, new drivers can unlock performance and fix bugs.

- Manage Background Processes: Limit unnecessary software running in the background, freeing up CPU cycles and RAM for the applications you're actively using.

- Clear Caches and Temporary Files: Over time, accumulated junk files can slow down storage access and overall system responsiveness.

- Choose Well-Optimized Software: Prioritize applications known for their efficiency and minimal resource footprint, especially on less powerful devices.

- Consider Hardware Upgrades Strategically: If software demands consistently outstrip your current hardware, target specific bottlenecks like RAM, SSD, or GPU.
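The "clear caches and temporary files" step above can be scripted. The following is a minimal sketch using only Python's standard library. The function names and the cache folder are hypothetical, and the 7-day threshold is an arbitrary example. It defaults to a dry run that only lists candidates, because deleting files another program still uses can break that program. Point it only at a folder you know is safe to clean, such as an app's own cache directory.

```python
import tempfile
import time
from pathlib import Path
from typing import Optional

SECONDS_PER_DAY = 86_400


def stale_files(directory: Path, max_age_days: float, now: Optional[float] = None) -> list[Path]:
    """Return regular files under `directory` not modified within `max_age_days` days."""
    now = time.time() if now is None else now
    cutoff = now - max_age_days * SECONDS_PER_DAY
    return sorted(p for p in directory.rglob("*") if p.is_file() and p.stat().st_mtime < cutoff)


def clear_stale(directory: Path, max_age_days: float = 7, dry_run: bool = True) -> list[Path]:
    """Return stale files; delete them only when dry_run is False."""
    victims = stale_files(directory, max_age_days)
    if not dry_run:
        for path in victims:
            path.unlink(missing_ok=True)  # ignore files another process already removed
    return victims


if __name__ == "__main__":
    # Hypothetical cache location used only for illustration.
    cache_dir = Path(tempfile.gettempdir()) / "example-app-cache"
    if cache_dir.is_dir():
        for path in clear_stale(cache_dir, max_age_days=7):
            print("would delete:", path)
```

Keeping deletion behind an explicit `dry_run=False` makes it easy to review what would be removed before anything is lost.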

"Global investment in custom silicon development by major tech firms surged by 45% between 2020 and 2022, signaling a decisive shift towards vertically integrated hardware-software ecosystems." – McKinsey & Company, 2023 Report on Semiconductor Trends.

The evidence is clear: the era of simply building faster hardware and expecting software to adapt is over. Performance, efficiency, and security are now inextricably linked to the deliberate co-design of hardware and software. Companies that master this vertical integration, like Apple with its M-series chips, demonstrate superior user experience and power efficiency, often outperforming competitors with theoretically more powerful, but less integrated, components. The data points towards a future where the seamless dance between silicon and code dictates market leadership, making deep architectural synergy the paramount factor in technological advancement.

What This Means For You

Understanding the dynamic tension between hardware and software fundamentally changes how you should approach technology:

- Informed Purchase Decisions: Don't just look at raw specifications. Evaluate how well a device's hardware and software are known to integrate. A perfectly optimized mid-range phone might offer a better experience than a spec-heavy, poorly integrated flagship.

- Proactive Maintenance: Regular software and driver updates aren't optional; they're essential for maintaining optimal performance and security, directly impacting how efficiently your hardware operates.

- Smarter Troubleshooting: When devices slow down, consider both hardware limitations and software inefficiencies. Often, a software culprit (like a resource-hogging app or outdated driver) is easier to address than a hardware upgrade.

- Appreciation for Complexity: The smooth operation of your devices is a testament to an incredibly complex, constantly evolving negotiation between physical components and abstract code.

Frequently Asked Questions

What's the fundamental difference between hardware and software?

Hardware refers to the physical components of a computer system, like the CPU, memory, and storage. Software is the set of instructions and programs that tell the hardware what to do. Think of hardware as the body and software as the mind—they can't function meaningfully without each other.

Can hardware function without software?

No, not in any practical sense for modern computing. While hardware can exist as a physical entity, it requires at least low-level software (like firmware or BIOS/UEFI) to even boot up and perform basic tasks. Without an operating system and applications, it's just inert circuitry.

Why is good hardware-software integration important?

Optimal integration leads to better performance, higher energy efficiency, enhanced security, and a smoother user experience. When hardware is designed with specific software in mind, and vice-versa, the system can achieve tasks with fewer resources and greater speed, as seen with Apple's M1 chips achieving significant gains in 2020.

Are there examples of hardware and software designed together?

Absolutely. Apple's M-series chips, Google's Tensor processors, and Tesla's custom FSD (Full Self-Driving) chips are prime examples. These companies design their silicon specifically to accelerate their own software, leading to specialized performance gains in areas like machine learning and graphics processing.